Make autonomous AI governable.

AmplefAI is the constitutional layer for autonomous AI. We make sure every agent action is authorized, auditable, and reversible — before it happens, not after.

You wouldn't let an employee operate without oversight. Your AI is no different.

The Problem

AI is powerful.

But it's not accountable.

Every AI agent acting without governance is an unaudited employee with root access. It can do anything — and no one can prove what it did or why.

Answers

AI produced text, and humans reviewed everything. The risk was manageable: you could always undo a bad paragraph.

Actions

AI started calling APIs, writing code, sending emails. Mistakes became harder to catch and harder to reverse.

Autonomous

Agents operate end-to-end — deploying code, moving data, making decisions. Governance is no longer optional.

DORA has required auditable, traceable digital operations in financial services since January 2025. The EU AI Act is bringing staged obligations into force across 2025–2027, with major requirements for many high-risk AI systems applying from August 2026. The governance layer these regulations demand does not yet exist in the current AI stack.

What's Missing

Watching isn't the same as controlling.

Most AI governance tools observe what happened. AmplefAI enforces what's allowed — in real time, before the action executes.

Why not just logs?

A log tells you "47 records were deleted." That's a record of what happened. AmplefAI provides cryptographic proof that the deletion was authorized before it happened. That's the difference between a security camera and a lock.

Why not just policy engines?

Policy engines like OPA and Cedar decide yes or no. But a decision isn't enforcement. AmplefAI doesn't just decide — it binds every action to proof that it was allowed. The difference is between an opinion and a contract.

How It Works

Three questions.

Answered before every action.

Before any AI agent acts, AmplefAI answers three questions: Who authorized this? Does it follow the rules? And will we remember what happened?

Authority

Who authorized this action?

Every agent gets a time-limited credential that defines exactly what it can do, for how long, and under whose authority. When the job is done, the credential expires. No exceptions.

- Time-limited, auto-expiring permissions

- If it's not explicitly allowed, it's blocked

- Safe delegation between agents

- Cryptographically signed
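To make the idea concrete, here is a minimal sketch of a time-limited, signed, deny-by-default credential. It is an illustration only, not AmplefAI's implementation: the function names, the claim fields, and the use of a shared-secret HMAC are all assumptions made for the example (a production system would more likely use asymmetric signatures and managed keys).

```python
import hashlib
import hmac
import json
import time

# Hypothetical demo key; an assumption for this sketch, not a real AmplefAI setting.
SIGNING_KEY = b"demo-key-not-for-production"


def _sign(claims):
    """Return an HMAC-SHA256 signature over a canonical JSON encoding of the claims."""
    payload = json.dumps(claims, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def issue_credential(agent, allowed_actions, ttl_seconds, issued_by, now=None):
    """Create a credential stating who may do what, for how long, on whose authority."""
    now = time.time() if now is None else now
    claims = {
        "agent": agent,
        "allowed_actions": sorted(allowed_actions),
        "issued_by": issued_by,
        "expires_at": now + ttl_seconds,
    }
    return {"claims": claims, "signature": _sign(claims)}


def is_authorized(credential, action, now=None):
    """Deny by default: allow only a listed action on a valid, unexpired credential."""
    now = time.time() if now is None else now
    claims = credential["claims"]
    # Any change to the claims after issuance invalidates the signature.
    if not hmac.compare_digest(_sign(claims), credential["signature"]):
        return False
    # The credential stops working once its time limit has passed.
    if now >= claims["expires_at"]:
        return False
    # Anything not explicitly listed is blocked.
    return action in claims["allowed_actions"]
```

In this sketch, a credential issued to a hypothetical `report-bot` for `read:invoices` with a 60-second lifetime authorizes that one action inside the window, and nothing else before or after it.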

Enforcement

Is this allowed?

Every action is checked against your policies before it happens. If it's not authorized, it's blocked. Every decision is recorded in a tamper-proof audit trail — not just what happened, but cryptographic proof it was allowed.

- Real-time policy enforcement

- Tamper-proof audit trail

- Custom rules for your business

- Stackable policies
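One common way to get this behavior is to check stacked policies before the action runs and write each decision to a hash-chained log. The sketch below assumes that approach. The names `AuditTrail` and `enforce` are hypothetical, and a hash chain makes tampering detectable rather than impossible, so treat it as an illustration of the pattern rather than a description of AmplefAI's internals.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash, record):
    """Hash a record together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


class AuditTrail:
    """Append-only log in which every entry commits to its predecessor."""

    def __init__(self):
        self.entries = []

    def append(self, record):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"prev": prev_hash, "record": record,
                 "hash": _entry_hash(prev_hash, record)}
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


def enforce(policies, trail, agent, action, execute):
    """Evaluate all policies, record the decision, then run the action only if allowed."""
    # Stacked policies must all agree; an empty policy list allows nothing.
    allowed = bool(policies) and all(policy(agent, action) for policy in policies)
    trail.append({"agent": agent, "action": action, "allowed": allowed})
    if not allowed:
        return None
    return execute()
```

The ordering is the point of the design: the decision is written to the trail before `execute` is called, so the record of authorization exists before the action does.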

Continuity

What gets remembered?

AI models get replaced. Agents get restarted. Vendors change. But your company's knowledge, policies, and operational history stay intact — versioned, protected, and always recoverable.

- Institutional memory that survives model changes

- Isolated scopes for different teams

- Full forensic replay

- Reconstruct any past decision
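Reconstructing a past decision depends on keeping the exact rules that were in force when it was made. A minimal sketch of that idea, assuming a versioned policy store and a decision log that records which version it used, might look like this. `PolicyStore` and `DecisionLog` are hypothetical names for the example, and the policies are reduced to simple sets of allowed actions.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyStore:
    """Keeps every published policy version so old decisions stay explainable."""
    versions: list = field(default_factory=list)

    def publish(self, allowed_actions):
        """Store a new immutable version and return its version number."""
        self.versions.append(frozenset(allowed_actions))
        return len(self.versions) - 1

    def decide(self, version, action):
        """Evaluate an action against one specific policy version."""
        return action in self.versions[version]


@dataclass
class DecisionLog:
    """Records each decision together with the policy version it was made under."""
    records: list = field(default_factory=list)

    def record(self, store, version, agent, action):
        allowed = store.decide(version, action)
        self.records.append({"agent": agent, "action": action,
                             "policy_version": version, "allowed": allowed})
        return allowed

    def replay(self, store, index):
        """Re-run a past decision and confirm it matches what was recorded."""
        past = self.records[index]
        return store.decide(past["policy_version"], past["action"]) == past["allowed"]
```

Because each record pins its policy version, publishing newer rules later does not change the outcome of replaying an older decision.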

Your agents can change.

Your rules don't.

Not a Whitepaper

This is running.

Right now.

AmplefAI isn't a proposal or a roadmap. It's a live system governing real agents across real infrastructure, 24/7.

The full governed execution spine — from context capture to cryptographic signing to deterministic replay — is proven end-to-end on live cloud infrastructure.

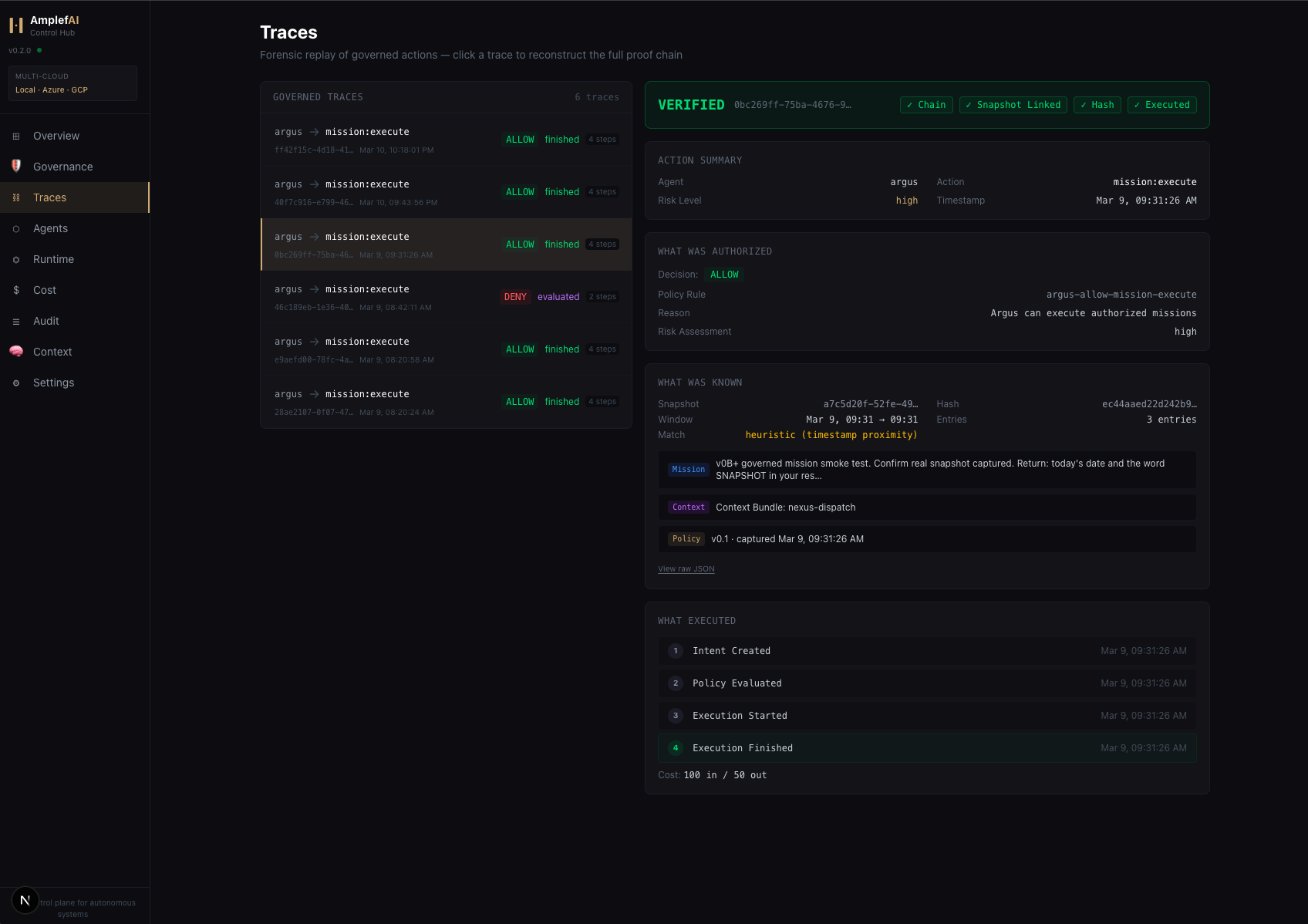

The Control Hub

See everything your agents are doing. Every decision, every action, every audit trail — in real time.

Real governed actions. Real forensic replay. Every trace verified.

Enterprise Value

One platform.

Every function.

AmplefAI gives every leader in your organization a reason to say yes to AI.

One governance layer for all AI agents. Standard APIs, unified observability, consistent deployment patterns.

Every agent action scoped, audited, and accountable. Policy enforcement at the governance layer. Zero implicit trust.

Per-task budgets, department-level controls, real-time cost attribution. Intelligent model routing eliminates waste.

AI operations with SLAs. Deterministic execution within policy bounds. Operational risk quantified and contained.

Deploy AI at enterprise scale without existential risk. Board-ready governance. Competitive advantage with control.

Custom gatekeepers, policy-as-code, CI/CD integration. AmplefAI fits your stack — it doesn't replace it.

DORA traceability. EU AI Act logging and record-keeping. Provable decision-state reconstruction for every governed action. Audit-ready by design, not by retrofit.

Why We Built This

We hit the limits ourselves.

Not capability limits — accountability limits.

Our own AI agents were powerful, productive, and completely ungoverned. When we couldn't answer "what did the agent do at 2 AM and who authorized it?" — we knew this had to exist. So we built it. Not a diagram. A working system, governed 24/7, in production.

Let's talk.

Whether you're exploring AI governance, planning an enterprise pilot, or looking for a design partner — we'd love to hear from you.

In private preview. Building with early partners.

Your information is confidential and secure. We will never sell or misuse it.

Follow the thinking

We're building AI governance in public. New posts on architecture, enforcement, and what we're learning along the way.

No spam. Governance-grade email only.

Models will change. Agents will restart. Vendors will come and go. The question is whether your rules, your knowledge, and your audit trail survive the transition.

Change is inevitable.

Drift is optional.